LLM-Assisted Agentic Edge Intelligence Framework

Keywords: Agentic AI, Industrial IoT, Edge Device, Edge Intelligence, Large Language Models, Resource Management, Context-aware Orchestration, Small LLM

Reference: Dehury, C.K., Kushwaha, S.S., Zhang, Q., Saleh, A., & Donta, P.K. (2026). LLM-assisted Agentic Edge Intelligence Framework. arXiv:2604.09607v1 [cs.DC]. Indian Institute of Science Education and Research Berhampur / Peking University / University of Oulu / Stockholm University.

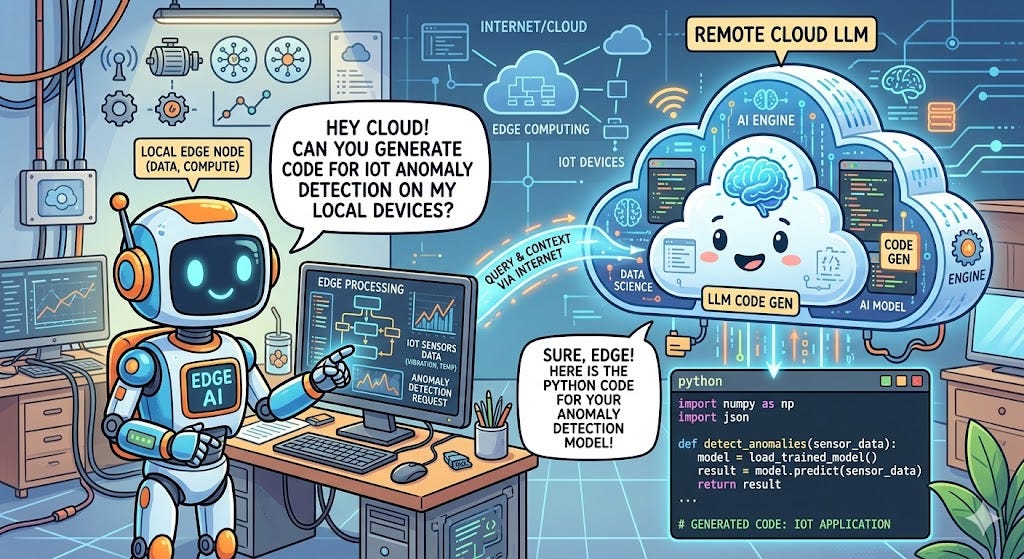

The paper introduces the LLM-assisted Edge Intelligence (LEI) framework, a novel agentic architecture that addresses a critical gap in Industrial IoT and edge computing deployments: the inability of static, hard-coded edge analytics to autonomously adapt to changing data distributions, new sensing modalities, and evolving operational goals without manual redeployment. The LEI framework leverages cloud-hosted Large Language Models (LLMs) as cognitive orchestrators to dynamically generate, validate, and deploy lightweight Python programs onto resource-constrained edge devices — eliminating the need for manually specified business logic.

Problem Statement

Edge devices in Industrial IoT environments (e.g., Raspberry Pi nodes in manufacturing, energy, or environmental monitoring) are typically resource-constrained with limited CPU, memory, and storage. Deploying LLMs directly on such devices is infeasible due to their computational demands. Conversely, existing edge analytics pipelines rely on predefined rules and models that cannot self-adapt when data patterns shift — a phenomenon known as data shift — or when new sensors are introduced. This creates a scalability bottleneck requiring continuous human oversight, increasing maintenance overhead and operational costs.

Technical Architecture

The LEI framework is structured around two primary domains: the Edge Execution Layer and the Cloud Computing Environment. The edge layer integrates a Sensor Interface, Data Monitor, Data Normalizer, Resource Stat monitor, LEI Core, Validator, Scheduler, Intelligence Driver, Intelligence Repository, and Intelligence Publisher. The cloud layer hosts the LLM, accessed via the Ollama framework using models with fewer than 10B parameters, such as qwen2.5-coder:7b, deepseek-coder:6.7b, phi3:3.8b, and llama3.1.
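To make the role of the Resource Stat monitor more concrete, here is a minimal sketch of how an edge node might collect the device statistics that are later passed to the LLM. It is not the paper's implementation: the function name `resource_snapshot`, the chosen fields, and the use of the standard library alone are assumptions made for illustration.

```python
import os
import shutil


def resource_snapshot(path: str = "/") -> dict:
    """Collect coarse device statistics using only the standard library.

    Hypothetical helper: the field names are illustrative, not taken
    from the LEI paper.
    """
    disk = shutil.disk_usage(path)
    # os.getloadavg() exists only on Unix-like systems (e.g. Raspberry Pi OS).
    try:
        load_1min = os.getloadavg()[0]
    except (AttributeError, OSError):
        load_1min = None
    return {
        "cpu_count": os.cpu_count(),
        "load_avg_1min": load_1min,
        "disk_total_mb": disk.total // 2**20,
        "disk_free_mb": disk.free // 2**20,
    }
```

Calling `resource_snapshot()` returns a small dictionary that can be serialized to JSON and embedded in a prompt, so the model can be asked to keep the generated scripts within the device's limits.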

The pipeline operates in four sequential steps:

Task Generation — the LEI Core aggregates sample data, metadata, context, and device resource statistics into a structured prompt dispatched to the cloud LLM, which returns a JSON task list;

Code Generation — the LLM synthesizes resource-aware, lightweight Python scripts for each task in batches;

Validation — generated code is executed locally against sample data with up to 2 correction attempts via LLM feedback loops;

Execution & Visualization — the Scheduler runs validated scripts on live raw data streams, with results published via dashboards or persistent storage.
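The first three steps above can be sketched roughly as follows. This is an illustration under stated assumptions, not the authors' code: the function names, the prompt wording, passing sample data over stdin, and running the script in a subprocess are all hypothetical choices. The `llm` argument is any callable that maps a prompt string to a reply string, so a cloud model or a test stub can be plugged in.

```python
import json
import os
import subprocess
import sys
import tempfile
from typing import Callable, Optional, Tuple

MAX_CORRECTIONS = 2  # the article reports up to 2 correction attempts


def build_task_prompt(sample: list, metadata: dict, resources: dict) -> str:
    """Step 1: pack sample data, metadata, and device stats into one prompt."""
    context = {"sample": sample, "metadata": metadata, "resources": resources}
    return (
        "Propose analytics tasks for this edge device. "
        "Reply with a JSON list of task descriptions.\n" + json.dumps(context)
    )


def run_script(code: str, sample: list, timeout: float = 10.0) -> Tuple[bool, str]:
    """Step 3: run generated code in a subprocess, feeding sample data on stdin."""
    with tempfile.NamedTemporaryFile("w", suffix=".py", delete=False) as handle:
        handle.write(code)
        path = handle.name
    try:
        proc = subprocess.run(
            [sys.executable, path],
            input=json.dumps(sample),
            capture_output=True,
            text=True,
            timeout=timeout,
        )
    except subprocess.TimeoutExpired:
        return False, "timeout"
    finally:
        os.unlink(path)
    return proc.returncode == 0, proc.stderr


def generate_validated_code(
    task: str, sample: list, llm: Callable[[str], str]
) -> Optional[str]:
    """Steps 2-3: generate code, then retry with error feedback on failure."""
    prompt = f"Write a lightweight Python script for this task: {task}"
    code = llm(prompt)
    for attempt in range(MAX_CORRECTIONS + 1):
        ok, error = run_script(code, sample)
        if ok:
            return code
        if attempt < MAX_CORRECTIONS:
            # Feed the failure back to the model and ask for a corrected script.
            code = llm(f"{prompt}\nThe previous script failed with:\n{error}\nFix it.")
    return None  # every attempt failed; the task is not scheduled


def fake_llm_factory() -> Callable[[str], str]:
    """Hypothetical stand-in for the cloud LLM: first reply fails, second works."""
    replies = iter([
        "print(undefined_name)",
        "import json, sys\ndata = json.load(sys.stdin)\nprint(sum(data) / len(data))",
    ])
    return lambda prompt: next(replies)
```

With the stub, `generate_validated_code("mean temperature", [20.5, 21.0, 21.5], fake_llm_factory())` rejects the first script, sends the error back, and returns the second one. A script that still fails after the two corrections yields `None`, which a scheduler could treat as "do not deploy".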

Experimental Evaluation

The framework was evaluated on four heterogeneous IoT datasets — Air Quality (PM2.5, NO2, O3), Temperature & Humidity, Soil Moisture, and Wind Speed/Direction — using two Raspberry Pi models (Pi 4B and Pi 5) and 8 LLM backends. Key findings:

average CPU utilization remained below 3% across all pipeline steps;

memory usage stabilized between 4.5–6.0%;

qwen2.5-coder:7b achieved the highest throughput (~300–400 prompt tokens/s); and

system reliability (code validation success rate) exceeded 85% across most model-dataset combinations.

The framework maintained low-latency, resource-efficient operation while autonomously adapting analytics logic without human intervention.

Code

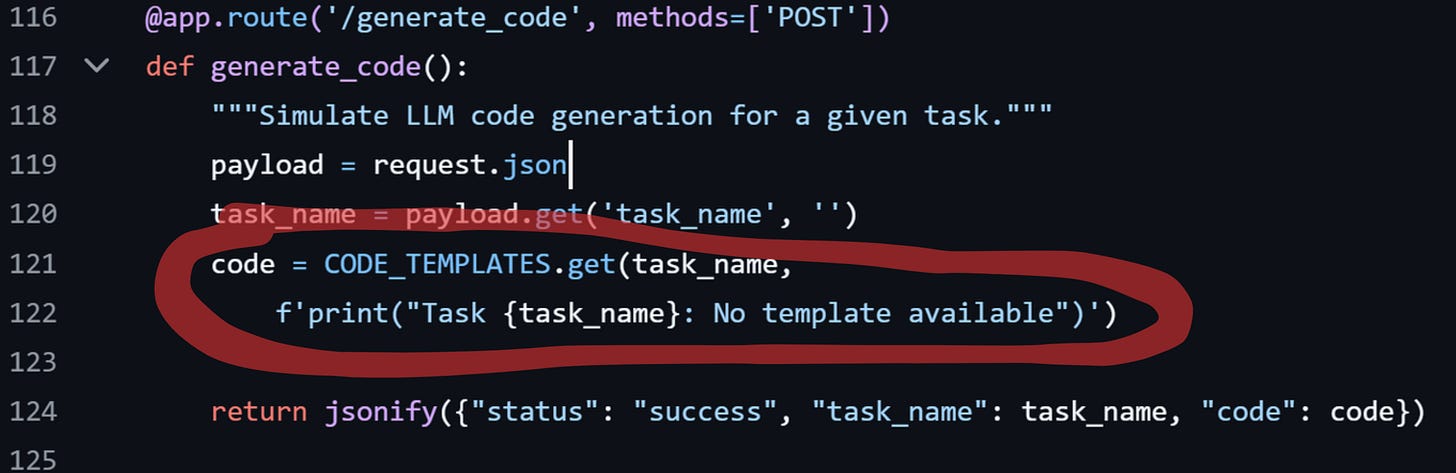

A simple demonstrator (without an LLM, using only a fake generator) is available at the following link:

    git clone https://github.com/venergiac/iiot-LEI-demonstrator
    python generate_data.py
    docker-compose up --build

To attach Ollama or any other LLM, replace the fake generator with:

    from ollama import chat
    from ollama import ChatResponse

    response: ChatResponse = chat(model='gemma3', messages=[
        {
            'role': 'user',
            'content': payload.get("task_description"),
        },
    ])

    code = response['message']['content']

Significance

The LEI framework represents a paradigm shift from static edge analytics to self-organizing, LLM-orchestrated edge intelligence — a foundational building block for next-generation Industrial IoT systems, Artificial Intelligence of Things (AIoT), and autonomous inspection platforms. Its resource-aware design makes it deployable on commodity edge hardware, democratizing adaptive AI for manufacturing, energy management, and predictive maintenance applications.

Author’s References

Agentic AI on Industrial Dashboards: Veneri et al. (2025), “Agentic AI on Customer Dashboards in Remote Monitoring and Diagnostic Services” (ResearchGate: 397207684) — both papers address autonomous AI agents for industrial monitoring.

Edge-Deployed AI & Knowledge Distillation: Veneri et al. (2023), “Revamping Bolt Inspection in Oil and Gas Industry: Edge-Deployed Robotic Machine Vision Model applying Knowledge Distillation” (ResearchGate: 373315659) — edge deployment of AI models on constrained hardware.

Virtual Sensors & Anomaly Detection: Veneri et al. (2023), “Sensor Virtualization for Anomaly Detection of Turbo-Machinery Sensors” (ResearchGate: 372657709) — virtual sensing and adaptive analytics align with LEI’s dynamic code generation for sensor streams.

Continual Learning: Veneri et al. (2024), “Anomaly Detection of Sensor Measurements During a Turbo-Machine Prototype Testing — An Integrated ML Ops, Continual Learning Architecture” (ResearchGate: 378540962) — continual adaptation to data shift is a shared theme.

Industrial IoT Book: Veneri, G. & Capasso, A. (2024), Hands-On Industrial Internet of Things: Build robust industrial IoT infrastructure by using the cloud and artificial intelligence, 2nd Ed., Packt Publishing — foundational IIoT architecture reference.

Patent — Virtual Sensor: Veneri et al. (2023), “Method of generating an output signal from a virtual sensor of an industrial machine with reliability/confidence check” (ResearchGate: 385249048) — reliability-checked AI outputs on industrial machines.